Case Study

FITIZENS

AI-powered video coach that analyzes exercise technique. Built from scratch by a two-person team.

The Product

Most fitness apps count reps. None of them can tell you your left knee is caving in on the third rep of your clean.

We founded FITIZENS in 2022 with one goal: build an AI coach that could watch you exercise and tell you what to fix. Not a rep counter. Not a pose overlay. A coach that understands biomechanics, spots technique issues, and explains them in plain language.

Over four years, we built the entire product from scratch: two co-founders, no external funding. The mobile app, the agentic AI workflow for video analysis, the serverless backend, the data infrastructure, the sensor fusion firmware, the exercise detection algorithms. All built in-house.

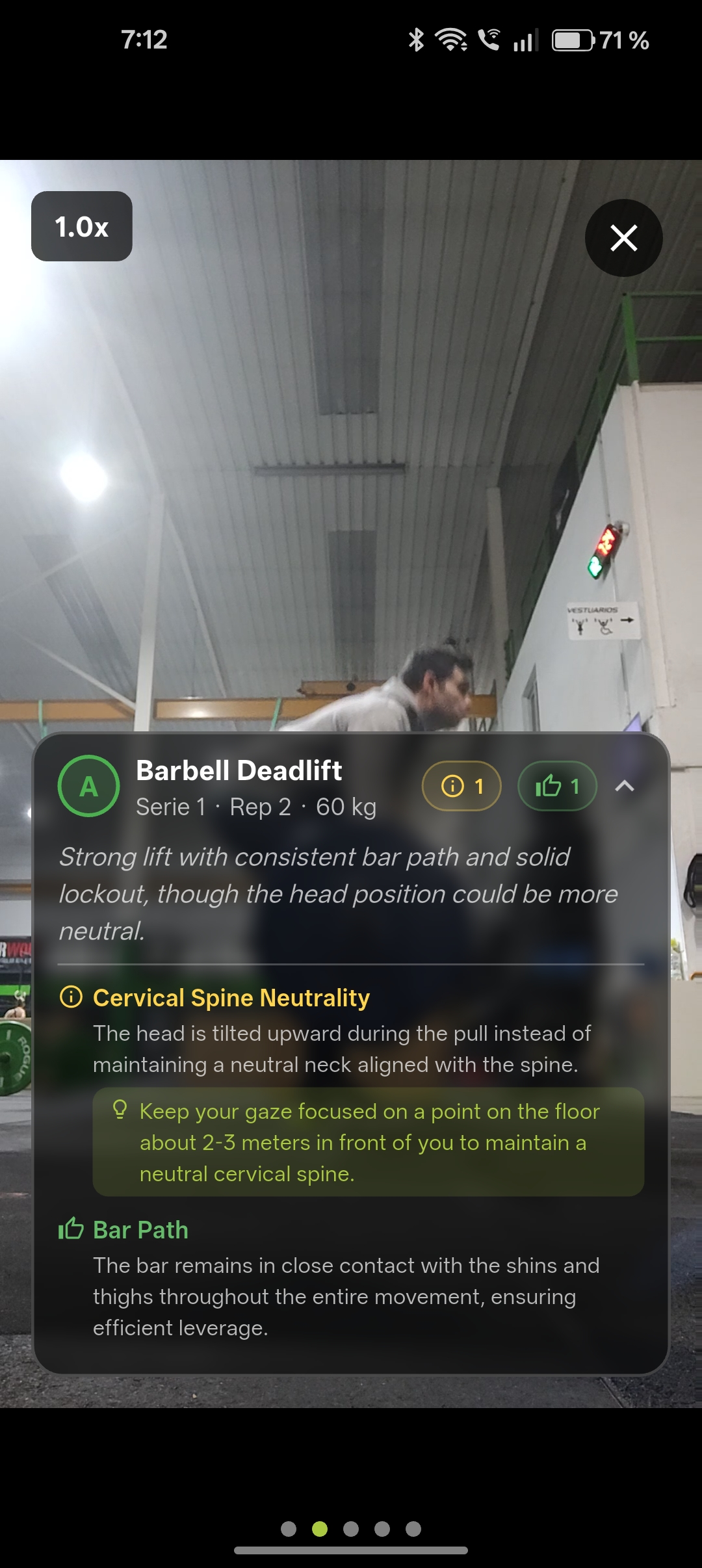

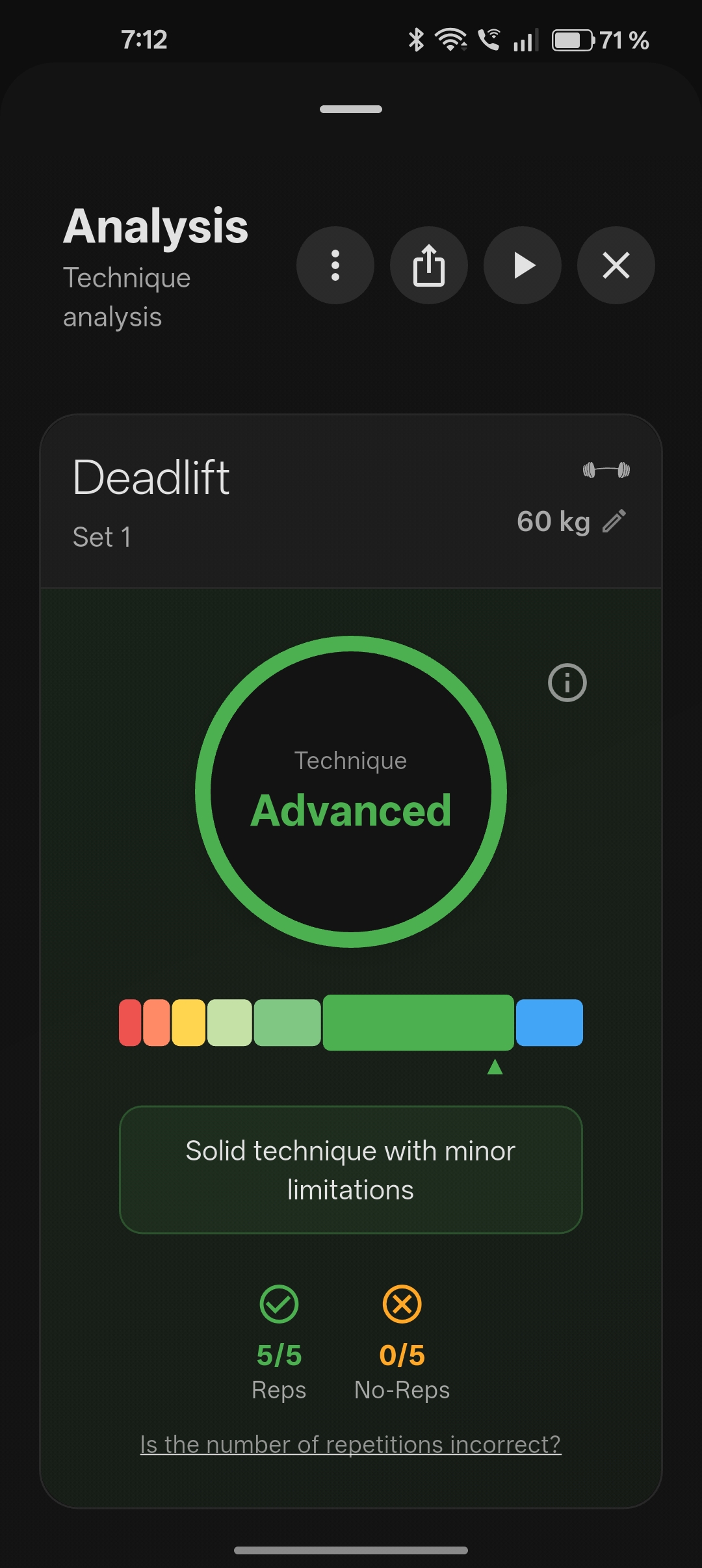

You open the app, pick an exercise, and record a video of your set. The AI watches every frame, counts your reps, scores each one, detects technique issues, and gives you specific feedback on what to improve. All from a phone camera, no extra hardware needed.

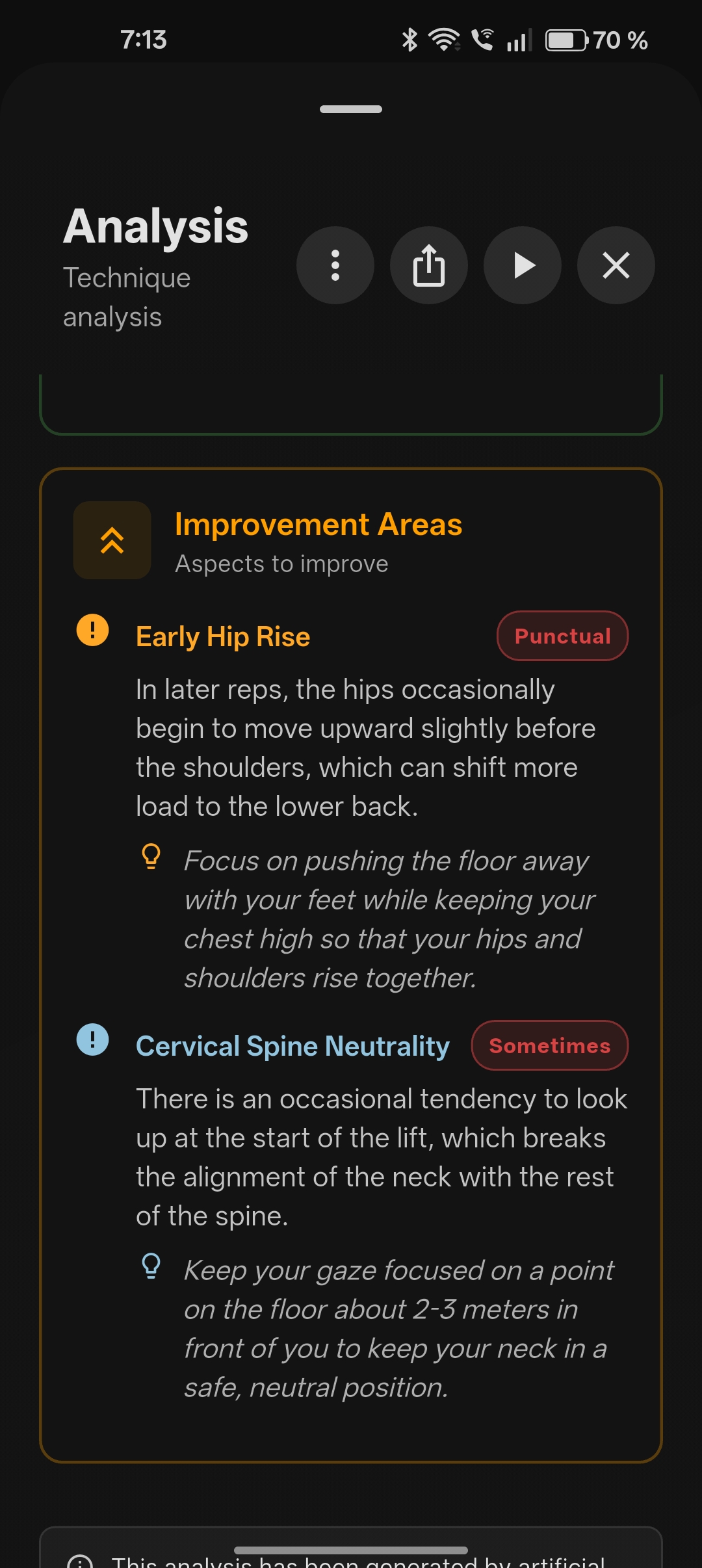

The feedback is not generic. Each exercise has its own biomechanical rubric: what good form looks like, what the common mistakes are, what makes a rep a no-rep. The AI debates itself internally before scoring, arguing both sides to reduce overconfidence.

We built a catalog of 542 exercises, each with its own rubric, muscle mappings, and quality criteria. The scoring system ranges from "Excellent" to "Unsafe", with specific coaching cues for every level.
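The case study doesn't describe how the internal debate is turned into a score. The sketch below shows one possible shape of the idea under stated assumptions. An advocate and a critic each propose a level on the "Excellent" to "Unsafe" scale. A hypothetical `settle` function then keeps the more conservative level and lowers confidence as the two sides disagree more. The middle labels of `LEVELS` and the reconciliation policy are illustrative assumptions, not FITIZENS internals.

```python
from dataclasses import dataclass
from typing import Tuple

# Hypothetical ordinal scale: only "Excellent" and "Unsafe" come from the case study;
# the middle labels are placeholders for illustration.
LEVELS = ("Unsafe", "Poor", "Fair", "Good", "Excellent")


@dataclass(frozen=True)
class Argument:
    side: str            # "for" = the rep meets the standard, "against" = it does not
    proposed_level: int  # index into LEVELS
    rationale: str       # the evidence this side cites from the video


def settle(advocate: Argument, critic: Argument) -> Tuple[str, str]:
    """Reconcile both sides of the debate into (score label, confidence).

    Assumed policy: keep the more conservative level, and lower the confidence
    as the gap between the two sides widens, so a contested rep is never
    reported as a confident verdict.
    """
    gap = abs(advocate.proposed_level - critic.proposed_level)
    level = min(advocate.proposed_level, critic.proposed_level)
    if gap == 0:
        confidence = "high"
    elif gap == 1:
        confidence = "medium"
    else:
        confidence = "low"
    return LEVELS[level], confidence


print(settle(
    Argument("for", 4, "Full depth and a vertical bar path"),
    Argument("against", 2, "Left knee drifts inward at the bottom"),
))  # ('Fair', 'low')
```

Biasing toward the lower level is one way to make disagreement show up as caution rather than as false precision. A model-based judge weighing both rationales would be an equally valid design.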

We tested it with 200+ athletes across CrossFit boxes in Madrid, iterating based on real training sessions and real feedback. The app is live on both the App Store and Google Play.

AI analyzes every frame of each rep

Per-rep scoring with confidence levels

Specific coaching cues for each issue

The Journey

First Pivot: B2B to B2C

We started by offering gyms a way to differentiate: an app that helped their athletes stay engaged, track performance, and improve technique using wearable sensors. After dozens of conversations and multiple iterations of the product and value proposition, the pattern was clear. Gyms liked the idea but were not ready to adopt new technology at scale. So we pivoted to a consumer app that athletes could use on their own, without needing their gym to buy in.

FITIZENS 1.0: sensor-based exercise detection and real-time rep counting

Second Pivot: Sensors to Camera

We had spent over a year on sensors: C++ fusion libraries, deep learning exercise detection, Bluetooth integration. It worked, but two problems kept growing. Athletes needed to understand why a rep was a no-rep, and sensors couldn't explain that. And the extra hardware was a barrier most consumers wouldn't accept.

When LLMs became capable enough to analyze video, we saw the answer to both problems. We designed an agentic workflow: AI agents that watch video, debate technique quality, and produce specific coaching feedback. No hardware, just a phone. The pivot wasn't just a swap of the input source. The app went from workout tracking to technique coaching, which meant rebuilding the product, the architecture, and the entire user experience around a fundamentally different value proposition.

Each pivot preserved the domain knowledge we had accumulated while replacing the technical layer underneath. The hardest engineering you do might be the engineering you throw away.

Under the Hood

The stack covers everything from mobile to AI to infrastructure. What looks like clean boxes on a diagram hides real depth.

Cloud Backend

Python Cloud Functions on Firebase handling the full lifecycle: triggering the agentic video analysis, managing subscriptions across Stripe, Apple IAP, and Google Play, transactional emails, analytics, and syncing results back to the app. Firestore for data, Cloud Storage for video, Firebase Auth for authentication.
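The case study doesn't say how subscriptions from three different stores are reconciled. The sketch below shows one common approach under stated assumptions. It assumes each store's notification (Stripe webhook events, App Store server notifications, Google Play real-time developer notifications) has already been parsed into a shared record. It then collapses those records into the single entitlement the app reads. `ProviderSubscription`, `Entitlement`, and `resolve_entitlement` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Iterable, Optional


@dataclass(frozen=True)
class ProviderSubscription:
    """One subscription as reported by a single store (hypothetical normalized shape)."""
    provider: str         # "stripe", "apple" or "google"
    user_id: str
    expires_at: datetime  # timezone-aware, UTC
    active: bool          # store reports it in good standing (e.g. not refunded or revoked)


@dataclass(frozen=True)
class Entitlement:
    user_id: str
    is_premium: bool
    source: Optional[str] = None
    expires_at: Optional[datetime] = None


def resolve_entitlement(user_id: str, subs: Iterable[ProviderSubscription],
                        now: datetime) -> Entitlement:
    """Collapse per-store subscriptions into the single entitlement the app reads.

    The user is premium if any store reports an active, unexpired subscription;
    the one that expires last is recorded as the source.
    """
    valid = [s for s in subs
             if s.user_id == user_id and s.active and s.expires_at > now]
    if not valid:
        return Entitlement(user_id, is_premium=False)
    best = max(valid, key=lambda s: s.expires_at)
    return Entitlement(user_id, True, best.provider, best.expires_at)


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
subs = [
    ProviderSubscription("stripe", "u1", datetime(2024, 12, 1, tzinfo=timezone.utc), True),
    ProviderSubscription("apple", "u1", datetime(2025, 2, 1, tzinfo=timezone.utc), True),
]
print(resolve_entitlement("u1", subs, now))
```

Keeping the resolution a pure function lets it be unit-tested outside Cloud Functions. In deployment, the function handling each store's callback would call it and write the result to Firestore.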

Mobile App

A Flutter application on iOS and Android with seven feature modules: video recording with on-device processing, AI-powered analysis, a 542-exercise catalog, session history, subscription management, onboarding, and settings. Videos are processed on-device before anything reaches the cloud.

The AI Coaching Engine

This is where the actual coaching happens. Not a single model call. An agentic workflow where multiple specialized AI agents collaborate to produce coaching feedback.

The user records a video of their exercise set. The workflow starts with rep detection: identifying where each repetition begins and ends. Then, for each rep, a technique analysis agent evaluates execution quality against the exercise's biomechanical rubric. A scoring agent debates the assessment internally, arguing both sides to reduce overconfidence before assigning a score. Finally, a feedback agent produces specific, actionable coaching cues in the user's language.
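The four stages described above can be sketched as a simple orchestration loop. This is a minimal sketch, not the FITIZENS implementation. Each agent is modeled as a plain Python callable, where in production each would wrap a model call with its own prompt. `Rep`, `RepReport`, `coach_set`, and the `fake_*` stubs are hypothetical names, and the stubs let the control flow run offline.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Rep:
    index: int
    start_s: float  # where the rep begins, in seconds from the start of the video
    end_s: float    # where the rep ends


@dataclass(frozen=True)
class RepReport:
    rep: Rep
    observations: List[str]  # technique issues found against the rubric
    score: str
    cues: List[str]          # coaching cues in the user's language


# Hypothetical agent interfaces; each would wrap a model call in a real system.
RepDetector = Callable[[str], List[Rep]]
TechniqueAnalyst = Callable[[str, Rep, str], List[str]]
Scorer = Callable[[List[str], str], str]
FeedbackWriter = Callable[[List[str], str, str], List[str]]


def coach_set(video_uri: str, rubric: str, language: str,
              detect: RepDetector, analyze: TechniqueAnalyst,
              score: Scorer, write_feedback: FeedbackWriter) -> List[RepReport]:
    reports = []
    for rep in detect(video_uri):                               # 1. rep boundaries
        observations = analyze(video_uri, rep, rubric)          # 2. technique vs rubric
        verdict = score(observations, rubric)                   # 3. debate, then score
        cues = write_feedback(observations, verdict, language)  # 4. actionable cues
        reports.append(RepReport(rep, observations, verdict, cues))
    return reports


# Offline stubs standing in for the model-backed agents.
def fake_detect(uri: str) -> List[Rep]:
    return [Rep(1, 0.0, 2.1), Rep(2, 2.1, 4.3)]


def fake_analyze(uri: str, rep: Rep, rubric: str) -> List[str]:
    return ["Left knee caves in"] if rep.index == 2 else []


def fake_score(observations: List[str], rubric: str) -> str:
    return "Unsafe" if observations else "Excellent"


def fake_feedback(observations: List[str], verdict: str, language: str) -> List[str]:
    return [f"[{language}] Drive your knees out"] if observations else []


for report in coach_set("set.mp4", "rubric text", "es",
                        fake_detect, fake_analyze, fake_score, fake_feedback):
    print(report.rep.index, report.score, report.cues)
```

Passing the agents in as parameters keeps the orchestration testable without any model calls. Because each rep is scored independently, a real pipeline could also analyze reps in parallel rather than in this sequential loop.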

Each exercise has its own rubric: what good form looks like, what the common mistakes are, what makes a rep count and what doesn't. The scoring ranges from "Excellent" to "Unsafe", with different coaching cues at every level. These rubrics are not generic templates. They encode real biomechanical knowledge, built exercise by exercise, with input from professional coaches.
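To make the idea of a per-exercise rubric concrete, here is a sketch of how one might be stored and rendered into text for an agent prompt. The `Rubric` fields mirror the parts named above: good form, common mistakes, no-rep conditions, and cues per score level. The `Rubric` and `render_rubric` names are hypothetical. The air squat content is an illustrative example based on common movement standards, not an entry from the FITIZENS catalog.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Rubric:
    exercise: str
    good_form: List[str]
    common_mistakes: List[str]
    no_rep_if: List[str]
    cues_by_level: Dict[str, List[str]] = field(default_factory=dict)


def _section(title: str, items: List[str]) -> str:
    return title + ":\n" + "\n".join(f"- {item}" for item in items)


def render_rubric(rubric: Rubric) -> str:
    """Turn one exercise's rubric into a text block for an agent prompt."""
    parts = [
        f"Exercise: {rubric.exercise}",
        _section("Good form", rubric.good_form),
        _section("Common mistakes", rubric.common_mistakes),
        _section("No-rep if", rubric.no_rep_if),
    ]
    for level, cues in rubric.cues_by_level.items():
        parts.append(_section(f"Cues when scored '{level}'", cues))
    return "\n\n".join(parts)


# Illustrative content only, not taken from the FITIZENS catalog.
AIR_SQUAT = Rubric(
    exercise="Air squat",
    good_form=["Hip crease drops below the top of the knee",
               "Knees track in line with the toes"],
    common_mistakes=["Knees caving inward", "Heels lifting off the floor"],
    no_rep_if=["Hip crease stays above the knee at the bottom",
               "Hips and knees not fully extended at the top"],
    cues_by_level={"Unsafe": ["Push your knees out over your toes"]},
)

print(render_rubric(AIR_SQUAT))
```

Keeping rubrics as structured data rather than hand-written prompt text means the same rubric can feed the analysis agent, the scoring agent, and the evaluation framework.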

Validating the AI

How do you know your AI coach is actually good? We built a dedicated evaluation framework on 900+ real exercise videos from actual users. Inside the agentic workflow, a set of guardrails first defines what responses must include, what to avoid, and how feedback should be structured. Then multiple evaluator agents, some running in parallel and others in sequence, review each of the user's movements against the exercise rubric. The evaluation framework compares the workflow's predictions against ground truth annotated by expert trainers, tracking accuracy across prompt versions, model updates, and rubric changes.
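A comparison like the one described above can be sketched as grouping predictions by configuration and scoring them against the trainers' labels. This is a minimal sketch under stated assumptions. Score levels are treated as ordinal integers, each configuration (prompt version, model, rubric version) is identified by a free-form tag, and the two metrics are exact agreement and agreement within one level. `Judgement`, `EvalSummary`, and `summarize` are hypothetical names, and the sample rows are made up.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class Judgement:
    config: str     # hypothetical tag for prompt version / model / rubric version
    rep_id: str
    predicted: int  # ordinal score level produced by the workflow
    expected: int   # level assigned by an expert trainer (ground truth)


@dataclass(frozen=True)
class EvalSummary:
    n: int
    exact: float       # share of reps where the predicted level matches exactly
    within_one: float  # share of reps off by at most one level


def summarize(rows: Iterable[Judgement]) -> Dict[str, EvalSummary]:
    """Group predictions by configuration and score them against ground truth."""
    counts = defaultdict(lambda: [0, 0, 0])  # config -> [n, exact hits, within-one hits]
    for row in rows:
        c = counts[row.config]
        c[0] += 1
        c[1] += int(row.predicted == row.expected)
        c[2] += int(abs(row.predicted - row.expected) <= 1)
    return {cfg: EvalSummary(n, exact / n, near / n)
            for cfg, (n, exact, near) in counts.items()}


rows = [  # made-up sample data
    Judgement("prompt-v1", "a", 3, 3),
    Judgement("prompt-v1", "b", 1, 3),
    Judgement("prompt-v2", "a", 3, 2),
    Judgement("prompt-v2", "b", 3, 3),
]
for config, summary in summarize(rows).items():
    print(config, summary)
```

Tracking a looser "within one level" metric alongside exact agreement helps separate near-misses from real scoring errors when comparing prompt or model changes.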

The Data Moat

The real competitive advantage.

Four years of domain knowledge, captured in structured data that powers every layer of the product.

At the core, 50 JSON schemas that play a dual role. Internally, they define the data contracts for all our APIs and services. But more importantly, they serve as the guardrails for the entire agentic workflow. Every AI agent in the system is constrained by these schemas: they define what coaching feedback can contain, how scores must be structured, what constitutes a valid rep assessment, and where the boundaries are. The schemas are what keep the AI agents honest. Without them, you have a chatbot. With them, you have a coaching system.
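To illustrate how a schema can act as a guardrail, the sketch below validates an agent's raw JSON output before it reaches the user. The schema is hypothetical, not one of the 50 FITIZENS schemas, and the score labels between "Excellent" and "Unsafe" are placeholders. The validator supports only the few JSON Schema keywords it uses (`type`, `enum`, `required`, `properties`, `additionalProperties`, `items`, `maxItems`), so it runs on the standard library alone. A real system would more likely use a full validator such as the `jsonschema` package.

```python
import json
from typing import Any, List

# Hypothetical schema for one rep assessment. Only "Excellent" and "Unsafe" come from
# the case study; the middle score labels are placeholders.
REP_ASSESSMENT_SCHEMA = {
    "type": "object",
    "required": ["rep_index", "score", "issues", "cues"],
    "additionalProperties": False,
    "properties": {
        "rep_index": {"type": "integer"},
        "score": {"type": "string",
                  "enum": ["Excellent", "Good", "Fair", "Poor", "Unsafe"]},
        "issues": {"type": "array", "items": {"type": "string"}, "maxItems": 5},
        "cues": {"type": "array", "items": {"type": "string"}, "maxItems": 3},
    },
}

_TYPES = {"object": dict, "array": list, "string": str, "integer": int}


def validate(instance: Any, schema: dict, path: str = "$") -> List[str]:
    """Return a list of violations (empty if valid) for a small JSON Schema subset."""
    expected = schema.get("type")
    if expected is not None:
        # bool is a subclass of int in Python; none of these types accept it.
        if not isinstance(instance, _TYPES[expected]) or isinstance(instance, bool):
            return [f"{path}: expected {expected}"]
    errors = []
    if "enum" in schema and instance not in schema["enum"]:
        errors.append(f"{path}: {instance!r} is not an allowed value")
    if isinstance(instance, dict):
        props = schema.get("properties", {})
        for key in schema.get("required", []):
            if key not in instance:
                errors.append(f"{path}: missing required field '{key}'")
        for key, subschema in props.items():
            if key in instance:
                errors.extend(validate(instance[key], subschema, f"{path}.{key}"))
        if schema.get("additionalProperties") is False:
            for key in sorted(set(instance) - set(props)):
                errors.append(f"{path}: unexpected field '{key}'")
    if isinstance(instance, list):
        if len(instance) > schema.get("maxItems", len(instance)):
            errors.append(f"{path}: more than {schema['maxItems']} items")
        for i, item in enumerate(instance):
            errors.extend(validate(item, schema.get("items", {}), f"{path}[{i}]"))
    return errors


def check_agent_output(raw: str) -> List[str]:
    """Guardrail: reject agent output that is not valid JSON or breaks the schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"$: invalid JSON ({exc.msg})"]
    return validate(data, REP_ASSESSMENT_SCHEMA)


print(check_agent_output(
    '{"rep_index": 3, "score": "Unsafe", '
    '"issues": ["Left knee caves in"], "cues": ["Drive your knees out"]}'
))  # []
```

When the returned list is non-empty, the workflow could re-prompt the agent with the errors or discard the response. The case study doesn't say which approach FITIZENS uses.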

542-exercise catalog

Bilingual descriptions, muscle group mappings, quality criteria, and biomechanical rubrics defining what good form looks like, common mistakes, and coaching cues for each exercise

900+ annotated exercise videos

Real exercise videos from actual users for LLM evaluation

40,000+ labeled repetitions

Labeled by professional trainers across 130 exercises (sensor era)

Exercise detection datasets

Raw accelerometer and gyroscope data, human-labeled in Label Studio

50 structured schemas

Agentic guardrail schemas

My Role

When there are two of you, job titles don't mean much. I led the technical architecture, backend, and payment systems. I designed the agentic video coaching workflow. I ran user research, customer conversations, and niche exploration. I built 50+ reusable development skills and 30+ AI agents to automate code generation, reviews, and architecture decisions. Dani focused on the mobile app and ML model development. But in practice, we both did everything that needed doing.

What We Learned

High NPS, but we never cracked the daily habit. Athletes liked improving their technique, but that improvement didn't solve an urgent pain. It was a vitamin, not a painkiller. Conversion was the bottleneck: people wanted it, but not enough to change their routine for it.

Pivoting is part of the process. The emotional cost is real, but you get used to it. The bigger cost is the work. The first pivot took months of rebuilding. The second one, thanks to AI-assisted development, took weeks. Same magnitude of change, radically different execution speed.

Building 50+ development skills and 30+ AI agents was the single highest-ROI investment of the project. It turned a two-person team into something that could compete on shipping speed with funded companies.

If you are building technology that doesn't exist yet, especially if it requires external data and custom infrastructure, you probably need funding. We bootstrapped the sensor era, and the lack of resources to collect enough data was an anchor that slowed us down significantly. On the other hand, that hard-won data became a real moat. With the agentic workflow pivot, development was faster and needed fewer resources, but the competitive moat is shallower. There is a real trade-off between speed and defensibility.

FITIZENS is four years of building, breaking, rebuilding, and learning compressed into one product. The technology changed twice. The domain expertise only grew.